Secure AI Deployment Architecture

Most enterprise AI deployments begin with a focus on capability and speed to production. Security architecture is frequently addressed after the system is already running, when retrofitting controls is significantly more difficult and expensive than building them in from the start. NYCF works with organizations at the design stage to establish the security baseline that AI deployments require, and with organizations that have already deployed AI systems to assess and harden their existing configurations.

Model isolation is the first architectural principle. A language model deployment should not have unrestricted network access to the organization's internal systems. The blast radius of a successful prompt injection attack grows with what the model can reach: if the model can query any internal database, send emails from any account, and write to any file system, a single injection can have consequences across the entire organization. NYCF's architecture reviews establish clear isolation boundaries, identifying the resources the model legitimately needs to perform its intended function and recommending controls that prevent access to everything else. Network segmentation, least-privilege API credentials, and explicit allow-listing of permitted tool calls are the architectural controls that contain the consequences of a successful attack.
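A minimal sketch of explicit tool allow-listing, as one way to picture the deny-by-default boundary described above. The tool names (`search_kb`, `create_ticket`), the `ToolCall` type, and the `authorize_tool_call` function are hypothetical and not tied to any particular agent framework.

```python
from dataclasses import dataclass

# Hypothetical tool names for illustration; a real deployment would derive
# this set from the model's documented, intended function.
ALLOWED_TOOLS = frozenset({"search_kb", "create_ticket"})


@dataclass(frozen=True)
class ToolCall:
    name: str
    arguments: dict


class ToolNotPermitted(Exception):
    """Raised when the model requests a tool outside the allow-list."""


def authorize_tool_call(call: ToolCall, allowed: frozenset = ALLOWED_TOOLS) -> ToolCall:
    # Deny by default: anything not explicitly listed is rejected,
    # regardless of what the prompt or the model output claims.
    if call.name not in allowed:
        raise ToolNotPermitted(f"tool {call.name!r} is not on the allow-list")
    return call
```

The check runs outside the model, in the code that dispatches tool calls, so an injected instruction cannot talk its way past it.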

API gateway security controls the interface between users and the AI system. Input filtering at the gateway can reject or sanitize known injection patterns before they reach the model, though it should never be the sole defense since attackers will find patterns that evade filters. Rate limiting prevents resource exhaustion attacks and limits the throughput available to model extraction attempts. Authentication and authorization at the gateway enforce which users can access which models or capabilities, supporting access control policies that a prompt-level system instruction cannot reliably enforce on its own.
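Rate limiting at the gateway is often implemented as a token bucket. The sketch below is one possible form, assuming a single process and one bucket per caller; the class name, parameters, and injectable clock are illustrative choices rather than any gateway product's API.

```python
import time


class TokenBucket:
    """Allows bursts up to `capacity`, refilled at `refill_per_second`."""

    def __init__(self, capacity: float, refill_per_second: float, clock=time.monotonic):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self._clock = clock  # injectable so the limiter can be tested without sleeping
        self._tokens = capacity
        self._last = clock()

    def allow(self, cost: float = 1.0) -> bool:
        # Credit tokens for the time elapsed since the last call, capped at capacity.
        now = self._clock()
        elapsed = now - self._last
        self._last = now
        self._tokens = min(self.capacity, self._tokens + elapsed * self.refill_per_second)
        # Spend tokens only if the request fits in the current budget.
        if self._tokens >= cost:
            self._tokens -= cost
            return True
        return False
```

Charging a higher `cost` for expensive requests (long prompts, large outputs) is one way to limit the throughput available to extraction attempts without penalizing ordinary use.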

Input and output filtering are complementary controls that operate at the application layer. Input filtering pre-processes user queries to detect and block known attack patterns, flag unusually long or structured inputs that may be attempting multi-step attacks, and enforce content policies before the query reaches the model. Output filtering validates model responses before they are delivered to users or passed to downstream systems, checking for policy violations, potential data leakage, and content that might constitute an attack against downstream processing components. Neither filter is perfect, but together they significantly raise the cost of a successful attack and provide detection signals for security monitoring.
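As a rough illustration of paired input and output checks, the sketch below returns findings that an application could block on or forward to security monitoring. The length threshold, the two injection patterns, and the `INTERNAL-ONLY` document marking are assumptions chosen for the example; real pattern sets would be broader and would still be evadable.

```python
import re

MAX_INPUT_CHARS = 4000  # assumed threshold for "unusually long" input

# Illustrative patterns only; attackers will find phrasings these miss.
INJECTION_PATTERNS = (
    re.compile(r"ignore (all )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"reveal (your )?system prompt", re.IGNORECASE),
)

# Hypothetical classification marking that should never appear in responses.
LEAK_MARKER = re.compile(r"\bINTERNAL-ONLY\b")


def check_input(text: str) -> list[str]:
    """Return findings for a user query before it reaches the model."""
    findings = []
    if len(text) > MAX_INPUT_CHARS:
        findings.append("too_long")
    if any(p.search(text) for p in INJECTION_PATTERNS):
        findings.append("injection_pattern")
    return findings


def check_output(text: str) -> list[str]:
    """Return findings for a model response before it is delivered."""
    findings = []
    if LEAK_MARKER.search(text):
        findings.append("leak_marker")
    return findings
```

Returning findings rather than a bare allow/deny decision keeps the detection signal available even when policy is to let a request through.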

LLM Governance Frameworks and Access Controls

Governance for AI systems is a distinct discipline from the technical controls that secure them. An organization may deploy an LLM with excellent technical security but still face significant risk from uncontrolled use: employees sending confidential client data to a consumer AI service, AI-generated content published without review, models making consequential decisions in domains where human oversight is required by policy or law. NYCF helps organizations establish LLM governance frameworks that address both the technical controls and the operational policies needed to use AI systems responsibly.

Access control for LLMs must address more dimensions than access control for conventional applications. It is not sufficient to control who can access the AI system. Organizations must also control what data the model can access, what tools it can use, what topics it can address, and what it can produce. Role-based access control policies for AI systems need to account for the probabilistic and generative nature of model behavior: a user with access to a model that has access to sensitive data is, in a meaningful sense, a user with access to that sensitive data, even if the model does not directly expose it through normal interaction. NYCF reviews existing access control policies for AI systems and recommends controls that enforce the intended access boundaries at the technical level rather than relying on the model's training to respect policy.
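One way to enforce this at the technical level is to compute, per session, the intersection of what the user may see and what the model is configured to reach, and to pass only that set to the retrieval and tool layers. The role names, model name, and scope labels below are hypothetical.

```python
# Hypothetical role-to-scope and model-to-scope mappings for illustration.
ROLE_SCOPES = {
    "hr_analyst": {"hr_records", "public_docs"},
    "engineer": {"public_docs", "source_code"},
}
MODEL_SCOPES = {
    "hr_assistant": {"hr_records", "public_docs"},
}


def session_scopes(role: str, model: str) -> frozenset:
    """Data scopes a session may use: never more than the user or the model alone."""
    user = ROLE_SCOPES.get(role, set())      # unknown roles get nothing
    granted = MODEL_SCOPES.get(model, set())  # unknown models get nothing
    return frozenset(user & granted)
```

With this approach an engineer talking to the HR assistant gets only `public_docs`, even though the model itself is able to reach `hr_records`.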

Monitoring and audit logging for AI systems require purpose-built solutions. Conventional application logs capture API calls and error states. AI monitoring must capture enough information about model inputs, retrieved context, tool calls, and outputs to support incident detection and post-incident analysis, without creating new data retention risks by logging sensitive content in full. NYCF designs logging architectures that record sufficient detail for security monitoring while applying appropriate redaction and retention controls to the log data itself.
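A sketch of a redacted audit record, assuming the design choice of storing a keyed digest and length for each prompt and response instead of the text itself. The field names and the `build_audit_record` helper are illustrative; whether digests are sufficient, or whether partially redacted text is also needed, depends on the organization's investigation requirements.

```python
import hashlib
import hmac
import json


def digest(content: str, key: bytes) -> str:
    # Keyed hash (HMAC-SHA256) so short or guessable content cannot be
    # recovered from the log by hashing candidate strings without the key.
    return hmac.new(key, content.encode("utf-8"), hashlib.sha256).hexdigest()


def build_audit_record(user_id: str, prompt: str, tool_names: list, response: str, key: bytes) -> dict:
    """Record who did what and how much, without storing the raw text."""
    return {
        "user_id": user_id,
        "prompt_hmac": digest(prompt, key),
        "prompt_chars": len(prompt),
        "tool_calls": list(tool_names),
        "response_hmac": digest(response, key),
        "response_chars": len(response),
    }


def to_log_line(record: dict) -> str:
    # Stable key order makes log lines easier to diff and to parse downstream.
    return json.dumps(record, sort_keys=True)
```

Identical prompts produce identical digests under the same key, which lets analysts correlate repeated attack attempts without reading the content.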

Data loss prevention for AI deployments addresses the risk that users will input sensitive information into an AI system that is not authorized to process it, and the risk that the AI system will output sensitive information that should not be shared. DLP controls for AI systems are distinct from traditional DLP because the channel is natural language: structured patterns like credit card numbers or Social Security Numbers are the easy case. The harder case is detecting when a user is asking a model to summarize confidential documents they pasted in, or when a model is producing responses that reveal sensitive information through inference rather than direct disclosure. NYCF designs DLP controls and policy frameworks specifically for AI interaction channels.
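For the easy case of structured patterns, a sketch of payment card detection: a regular expression finds candidate digit runs and the Luhn checksum filters out most false positives. The function names are illustrative, and this covers none of the harder inference-based leakage described above.

```python
import re

# 13 to 19 digits, optionally separated by single spaces or hyphens.
CARD_CANDIDATE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def luhn_valid(digits: str) -> bool:
    """Luhn checksum: double every second digit from the right, sum, test mod 10."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def find_card_numbers(text: str) -> list[str]:
    """Return digit strings in `text` that look like card numbers and pass Luhn."""
    found = []
    for match in CARD_CANDIDATE.finditer(text):
        digits = re.sub(r"[ -]", "", match.group())
        if luhn_valid(digits):
            found.append(digits)
    return found
```

The same function can run on both user input and model output, which matches the two directions of risk the DLP policy has to address.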

AI Asset Inventory and Risk Classification

All AI systems in use across the organization are catalogued, classified by risk level and data access, and evaluated against the organization's existing governance policies.

Access Control Policy Design

Role-based access policies for AI systems are designed to enforce intended data and tool access boundaries at the technical level, not through model instruction alone.

Monitoring and Logging Architecture

Logging and monitoring systems are designed to capture sufficient detail for incident detection and post-incident analysis while applying appropriate redaction and retention controls.

DLP Controls for AI Channels

Data loss prevention policies and technical controls are designed specifically for natural language AI interaction channels, addressing both input and output data risks.

Policy Documentation and Governance Program

Governance policies, acceptable use standards, and review procedures are documented to support compliance programs and provide evidence of AI risk management activities.

AI Risk Assessment and Threat Modeling

AI risk assessment applies structured threat modeling methodologies to AI systems, identifying the specific attack paths, failure modes, and business consequences relevant to each deployment. This is not a generic checklist exercise. The risks of an AI system that writes marketing copy are categorically different from those of a system that answers employee HR questions, which are different again from a system that takes autonomous actions in production infrastructure. NYCF's AI risk assessment is scoped and tailored to the organization's specific AI deployments and their business context.

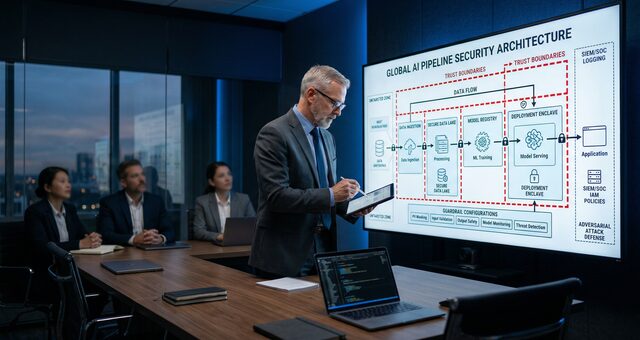

Attack surface mapping for AI systems begins with architectural documentation: what components exist, how they communicate, what data they process, and what external systems they connect to. From this foundation, NYCF identifies every input channel through which an adversary could attempt to influence the model's behavior, every data store from which the model could be induced to retrieve or disclose sensitive content, every tool or API the model has access to, and every output channel through which model-generated content reaches users or downstream systems. This mapping is a prerequisite for meaningful threat modeling and for scoping subsequent adversarial testing.

Threat modeling for AI systems identifies the realistic threat actors relevant to each deployment and the specific techniques those actors are likely to use. An internal corporate AI assistant faces different threats from an externally accessible customer service chatbot. A model used for automated decision-making faces regulatory exposure that a purely advisory model does not. NYCF produces threat models that identify priority attack scenarios, assess their likelihood and potential impact, and prioritize the technical controls and governance measures that address the highest-risk findings. These threat models serve as both an engineering deliverable for the teams building and maintaining the AI system and a compliance document demonstrating that the organization has conducted the risk assessment that NIST AI RMF and ISO 42001 call for.
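To make the likelihood and impact assessment concrete, the sketch below ranks scenarios by a simple likelihood times impact score. The 1 to 5 scales, the multiplication rule, and the scenario names are assumptions for illustration and are not a description of NYCF's scoring method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    name: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (expected)
    impact: int      # assumed scale: 1 (minor) to 5 (severe)

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


def prioritize(scenarios: list) -> list:
    """Highest score first; ties keep their original order (sorted is stable)."""
    return sorted(scenarios, key=lambda s: s.score, reverse=True)
```

A ranking like this is only a starting point for discussion; two scenarios with the same score can call for very different controls.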

Secure RAG, AI Supply Chain, and MLOps Security

Retrieval-Augmented Generation systems require security controls at every layer of the retrieval stack. The vector database that stores document embeddings must enforce access controls aligned to the underlying documents' permissions: a user who cannot access a confidential document should not be able to surface its content, or its embeddings, through a similarity search. NYCF designs vector database access control architectures that enforce document-level permissions through the retrieval layer, preventing cross-tenant data leakage and unauthorized information access through the RAG interface.
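A sketch of document-level permission enforcement in the retrieval layer, using an in-memory list in place of a real vector database. The `Chunk` type, the group-based ACL, and the `retrieve` function are hypothetical; the point is that the permission filter runs before ranking, so unauthorized chunks never become candidates.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    doc_id: str
    embedding: tuple
    allowed_groups: frozenset  # copied from the source document's permissions


def cosine(a, b) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(query_embedding, chunks, user_groups, k: int = 3) -> list:
    # Filter first: a chunk the user may not read is never scored or returned,
    # no matter how similar it is to the query.
    permitted = [c for c in chunks if c.allowed_groups & user_groups]
    ranked = sorted(permitted, key=lambda c: cosine(query_embedding, c.embedding), reverse=True)
    return ranked[:k]
```

Production vector stores typically express the same idea as a metadata filter applied inside the similarity query, which avoids loading unauthorized chunks at all.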

Embedding integrity controls verify that the content in the vector database reflects the authoritative versions of the source documents, not content that has been modified by an unauthorized party. NYCF designs integrity verification processes for knowledge base content, including hash verification of source documents, access logging for knowledge base write operations, and anomaly detection for unusual patterns of knowledge base modification that may indicate an embedding poisoning attack. Retrieval controls govern which documents are eligible to be retrieved for which users and in which contexts, preventing a user from crafting queries designed to surface content outside their authorized scope.
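Hash verification of source documents can be sketched as a manifest of SHA-256 digests recorded when content is approved, checked again before content is embedded or re-indexed. The function names and the in-memory dictionaries are illustrative; a real manifest would need its own write protection.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def build_manifest(documents: dict) -> dict:
    """Record a digest per document at the time its content is approved."""
    return {doc_id: sha256_hex(body) for doc_id, body in documents.items()}


def find_integrity_failures(documents: dict, manifest: dict) -> list:
    """Return ids of documents that are modified or absent from the manifest."""
    failures = []
    for doc_id, body in documents.items():
        expected = manifest.get(doc_id)
        # A document with no manifest entry is treated as untrusted, not skipped.
        if expected is None or expected != sha256_hex(body):
            failures.append(doc_id)
    return sorted(failures)
```

Documents that fail the check would be held back from embedding and raised for review rather than silently re-indexed.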

AI supply chain security addresses the full chain of model provenance from pre-training through deployment. Most enterprise AI deployments rely on base models from large providers, potentially fine-tuned by a third party or by internal teams, running on cloud infrastructure managed by another provider. Each step in that chain is a trust dependency. NYCF reviews the security practices of third-party model providers, evaluates the controls around fine-tuning data and processes, and assesses the security of the deployment environment, including the infrastructure provider's isolation guarantees and the organization's configuration of that infrastructure. Model provenance documentation, which tracks which model version is deployed and what its training lineage is, supports both security monitoring and compliance with regulations that increasingly require organizations to document the AI systems they use.

MLOps security applies to the pipelines that train, evaluate, version, and deploy machine learning models. A CI/CD pipeline for ML has the same security requirements as any other CI/CD pipeline, plus additional requirements specific to ML workflows: access controls on training data repositories, integrity verification for datasets before they enter training runs, code review and approval requirements for training scripts that could introduce behavioral changes, model evaluation gates that must be passed before a new model version is deployed to production, and rollback procedures for returning to a previous model version if a deployment introduces unexpected behavior. NYCF reviews MLOps pipeline security against these requirements, identifies gaps, and recommends specific controls and process changes to address them.
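A model evaluation gate can be as simple as a set of minimum scores that a candidate model must meet before promotion. The sketch below assumes higher-is-better metrics and hypothetical metric names; real gates often add regression checks against the currently deployed version.

```python
def evaluate_gate(metrics: dict, minimums: dict) -> list:
    """Return the names of required metrics that are missing or below threshold."""
    failures = []
    for name, minimum in minimums.items():
        value = metrics.get(name)
        # A missing metric fails the gate: an evaluation that did not run
        # must not be treated as an evaluation that passed.
        if value is None or value < minimum:
            failures.append(name)
    return failures


def may_deploy(metrics: dict, minimums: dict) -> bool:
    return not evaluate_gate(metrics, minimums)
```

A pipeline step would call `may_deploy` and stop the release on `False`, keeping the previous model version in place.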

Secure RAG Architecture

Vector database access controls, embedding integrity verification, and retrieval permission enforcement designed to prevent cross-tenant leakage and unauthorized knowledge base access.

AI Supply Chain Review

Evaluation of model provenance, third-party provider security practices, fine-tuning controls, and deployment environment security across the full model supply chain.

MLOps Pipeline Hardening

Security review and control recommendations for ML training, evaluation, versioning, and deployment pipelines, including data integrity verification and deployment approval gates.

AI Incident Response Planning

Documented procedures for detecting, containing, and documenting AI security incidents, including model rollback protocols and forensic preservation of AI system evidence.

AI Compliance Architecture: NIST AI RMF, ISO 42001, and EU AI Act

Regulatory requirements for AI systems are advancing faster than most organizations' compliance programs. The NIST AI Risk Management Framework, a voluntary framework published in 2023, has rapidly become the baseline reference for AI governance in US government procurement and private sector risk management. ISO 42001, also published in 2023, provides a certifiable management system standard for AI that follows the structure familiar from ISO 27001 information security certification. The EU AI Act entered into force in 2024, with obligations for high-risk AI systems taking effect on a rolling schedule through 2027. Each framework sets distinct documentation and control expectations, and organizations deploying AI systems across multiple markets may need to satisfy more than one simultaneously.

NYCF's AI compliance architecture work begins with a gap assessment: identifying which frameworks apply to the organization's specific AI deployments, what each framework requires, and where the organization's current controls and documentation fall short of those requirements. This gap assessment produces a prioritized remediation roadmap that addresses the most significant compliance gaps first, with specific control recommendations and documentation requirements for each identified gap.

For organizations pursuing ISO 42001 certification, NYCF can support the full path from initial gap assessment through control implementation and documentation to readiness review. The ISO 42001 management system requires documented policies for AI governance, risk assessment procedures, control selection and implementation, internal audit programs, and management review processes. NYCF designs each of these program components to satisfy ISO 42001 requirements while remaining operationally practical for the teams responsible for running them.

EU AI Act compliance for high-risk systems requires technical documentation of the system's intended purpose, performance characteristics, training data, and testing and validation results, as well as ongoing monitoring and post-market surveillance. For organizations deploying high-risk AI systems in employment, credit, or other regulated domains, NYCF can design the technical documentation framework and monitoring program required for EU AI Act conformity, and can provide the adversarial testing documentation that the Act's robustness requirements demand. Paired with security testing from NYCF's AI security testing practice, the architecture and compliance documentation work produces a complete package that demonstrates responsible AI deployment to regulators, customers, and legal counsel.